Every physical structure in the Universe is described by space and time. Similarly, an algorithm’s efficacy can be determined by its Space and Time complexity. Although we all know there are many ways to solve a problem in programming, understanding how the algorithm functions effectively will help us improve our programming skills. Knowing how to determine a program/effectiveness algorithm using Space and Time complexity will cause the program to behave in the necessary optimal conditions, making us more efficient programmers.

Let us concentrate on Time complexity in this post while we save room for understanding Space complexity in the future. Time is indeed money. In this article, you’ll learn how to test a program based on Time complexity and a gentle introduction to the Time complexity of an algorithm.

Table of Contents

Time Complexity Definition

The amount of time it takes an algorithm to run as a function of the length of the input is known as time complexity. It calculates how long each statement of code in an algorithm takes to execute.

What is Time Complexity?

The amount of time it takes an algorithm to run as a function of the length of the input is known as time complexity. The length of input indicates the number of operations that the algorithm can perform. This paints a clear picture of what Time Complexity is trying to tell us. It will not consider the average execution time of an algorithm. Rather, it will provide information on the difference in execution time (increase or decrease) as the number of operations in an algorithm grows or shrinks. Yes, as mentioned in the summary, the time it takes is solely determined by the length of the input.

To clarify, time complexity refers to the amount of time it takes for each declaration of code in an algorithm to be executed. When a statement is set to run repeatedly, the number of times it is run is equal to N multiplied by the time it takes to run the function each time.

What are the Different Types of Time Complexity Notation Used?

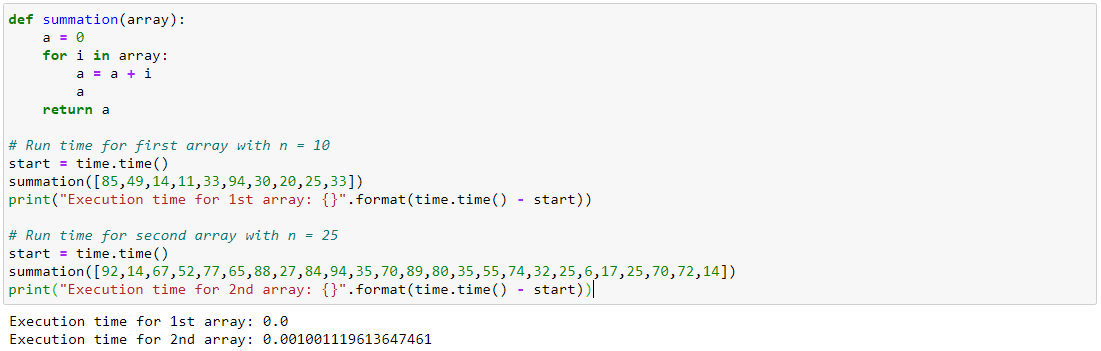

Time complexity is defined as time as a function of input length, as we’ve seen. Furthermore, there is a time-dependent relationship between the size of the input data (n) and the number of operations performed (N). The order of growth in Time complexity is denoted by the notation O[n], where O is the order of growth and n is the input length. ‘Big O Notation is another name for it.

By defining the N number of operations that are performed on an algorithm, Big O Notation expresses its run time in terms of how quickly it grows relative to the input ‘n.’ As a result, an algorithm’s time complexity is defined as the sum of all O[n] assigned to each line of code.

There are different types of time complexities used, let’s see one by one:

Constant time – O (1)

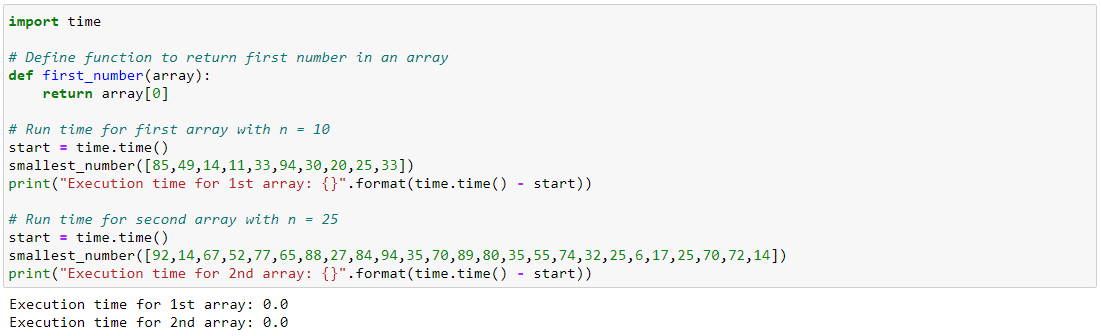

When an algorithm is not based on the input size n, it is said to have constant time with order O (1). The runtime will still be the same, regardless of the input size n.

Linear time – O(n)

When the running time of an algorithm increases linearly with the length of the input, it is said to have linear time complexity. When a function checks all of the values in an input data set, it is said to have a Time complexity of order O(n).

Logarithmic time – O (log n)

When an algorithm reduces the size of the input data in each step, it is said to have a logarithmic time complexity. This means that the number of operations is not equal to the size of the input. As the size of the input becomes larger, the number of operations decreases. Binary trees and binary search functions are examples of algorithms with logarithmic time complexity. The search for a given value in an array is done by breaking the array into two parts and beginning the search in one of them. This ensures that the operation isn’t performed on every data variable.

Quadratic time – O (n^2)

When the running time of an algorithm increases non-linearly (n^2) with the length of the input, it is said to have a non-linear time complexity. In general, nested loops fall into the O(n)*O(n) = O(n^2) time complexity order, where one loop takes O(n), and if the function includes loops inside loops, it takes O(n)*O(n) = O(n^2).

Similarly, if the function has ‘m’ loops, the order is given by O (n ^m), which is referred to as the polynomial time complexity function.

How to Evaluate an Algorithm for Time Complexity?

We’ve seen how each function is given an order notation, as well as the relationship between runtime and the number of operations and input size. Now it’s time to learn how to calculate the total run time needed to run an algorithm for a given n by evaluating the Time complexity of an algorithm based on the order notation it receives for an operation and input size.

Let us look at how to determine an algorithm’s Time complexity.:

The algorithm is as follows:

- Given two input matrices, each of which is a square matrix of order n

- Using the np.random function, the values of each variable in both matrices are chosen at random.

- Initially, a result matrix of 0 values in the same order as the input matrix was allocated.

- Each X element is multiplied by each Y element, and the result is stored in the result matrix.

- After that, the result matrix is transformed into a list form.

- For each factor in the result list, the total is added to arrive at the final answer.

Time Complexity of Sorting algorithms

Understanding the time complexity of sorting algorithms aids us in selecting the most appropriate sorting technique for a given situation. The time complexities of some sorting techniques are listed below:

Insertion Sort Time Complexity:

Insertion Sort has an O(n) time complexity in the best case. In the worst-case scenario, the time complexity is O(n2).

Merge Sort Time Complexity:

On all types of instances, this sorting method has stable time complexity. In the best case, Merge Sort has an O(nlogn) time complexity. In the worst-case scenario, the time complexity is O(nlogn). This is because Merge Sort uses the same number of sorting steps in all situations.

Bubble Sort Time Complexity:

The time complexity of Bubble Sort in the best case is O(n). In the worst case, the time complexity is O(n^2).

Quick Sort Time Complexity:

Fast Sort has an O(nlogn) time complexity in the best case. The time complexity is O(n^2) in the worst case. Due to its efficiency of O(nlogn) in the best and average cases, Quicksort is considered the fastest of the sorting algorithms.

Time Complexity of Searching Algorithms

Let’s look at the time complexity of some search algorithms to see which one is the fastest.

Time Complexity of Linear Search:

Sequential access is followed by Linear Search. In the best case, the time complexity of Linear Search is O(1). The time complexity is O(n) in the worst case.

Time Complexity of Binary Search:

The binary search algorithm is the faster of the two. However, linear search performs better for smaller arrays. In the best case, the time complexity of Binary Search is O(1). The time complexity is O(log n) in the worst case.

Space Complexity – A Note

You’ve probably heard of the word ‘Space Complexity,’ which comes up often when discussing time complexity. What is the concept of space complexity? Any algorithm, after all, necessitates working space or storage. It is proportional to or directly depending on the amount of input the algorithm receives. To determine space complexity, simply measure the amount of space taken up by the variables in an algorithm. The algorithm runs faster when there is less space. It’s also important to understand that time and space complexity are unrelated.

Conclusion

We covered the fundamentals of time complexity in this article, as well as the importance of using it in our algorithm design. We also learned how to assign the order of notation for any algorithm based on the cost function and the number of times the statement is defined to run, and we learned how to the order of notation for any algorithm based on the cost function and the number of times the statement is defined to run.